How bad is ChatGPT at using your Apple Health data?

Apple Health can now be connected to ChatGPT, but don’t do it.

We warned you. In a disappointing and totally predictable way, giving ChatGPT access to Apple Health data has been shown to be as bad an idea as we said it would be.

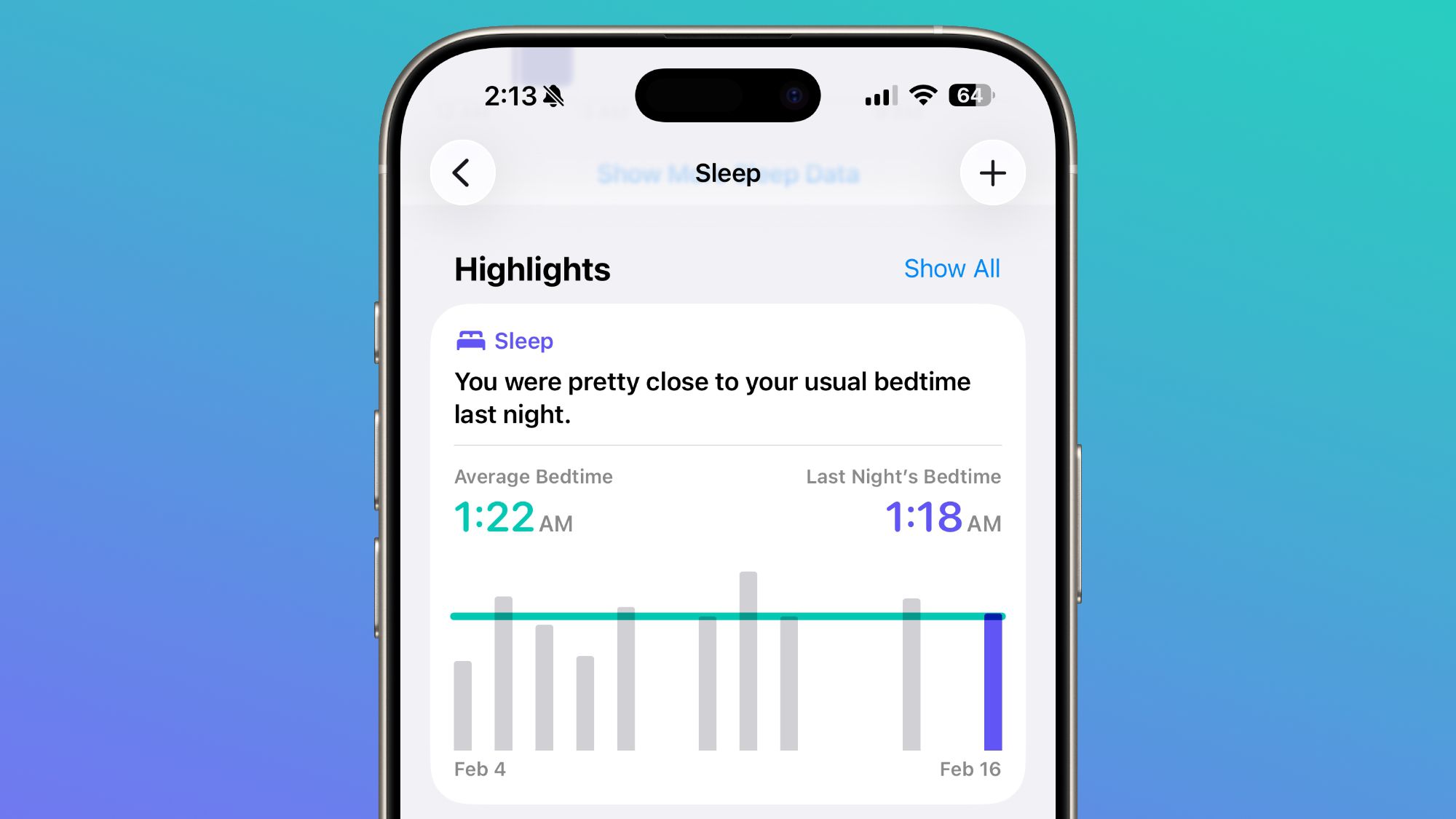

Since January 7, 2026, it’s been possible to connect ChatGPT to Apple Health. This is similar to the way that ChatGPT can also connect to apps like Notion, app-making tools like Xcode, and so on.

Except in all of those cases, when — not if — ChatGPT does something wrong, it’s mostly just annoying. You might not spot it right away with an app like Notion, but Xcode will probably fail to compile so you get error messages.

With health, there are no such error messages. AppleInsider is really clear on this — do not connect ChatGPT to Apple Health. Not now when you have to apply to test it out, and not when it’s rolled out publicly like a finished and working tool.

There is of course the potential privacy issue that you are surrendering your most personal data to an AI company, and trusting its word that it won’t sell that on. But as a new report in the Washington Post now shows, it’s not as if you get anything useful back for it.

Columnist Geoffrey A. Fowler says that he connected the two because he had something in the order of a decade of Apple Health data. OpenAI says its ChatGPT can analyze trends in this and keep you informed about your overall health.

That decade of Apple Health data amounted to 6 million heartbeat measurements and 29 million steps. ChatGPT considered all of these when asked to grade Fowler’s health from A down to F.

He got an immediate F.

It took Fowler’s doctor to calm him down. Not only did the data not support an F, said the doctor, Fowler is actually at such low risk of a heart attack that his insurance probably wouldn’t pay for a test.

Then AI medicine expert Eric Topol, a cardiologist with the Scripps Research Institute, also looked at the F. “It’s baseless,” he reportedly said. “[ChatGPT] is not ready for any medical advice.”

You are not remotely shocked. But you read AppleInsider.

True, you do have to be using Apple Health to have any data to share. So you have to be at least that little bit aware of your health and of recording data.

But AI is sold as this all-knowing and, crucially, all-understanding god. OpenAI says over 230 million users ask ChatGPT health questions every week, so this is a huge market that is going to be badly served when access to the health connection fully goes public.

“People that do this are going to get really spooked about their health,” said Topol. “It could also go the other way and give people who are unhealthy a false sense that everything they’re doing is great.”

Why it goes so wrong

Fowler also tried a similar feature in Anthropic’s Claude and got a D grade. That’s of course better for your state of mind, but there’s no way to know it’s actually more accurate.

Apple does not share your Health data. But if you give ChatGPT permission to connect, you are sharing that data yourself.

Since AI does not actually reason and instead solely compares patterns, both of these bots made assumptions. ChatGPT, for one thing, was thrown by the changes in recorded heart rate every time Fowler upgraded to a new Apple Watch.

It also ignored most of the specific data that Apple Watch records, in favor of one that Apple says is an estimate. That’s Cardio Fitness, or VO2 max, which tracks the maximum amount of oxygen your body can take in.

Reportedly, Apple says that this is an estimate, yes, but a good one. Other sources reportedly say its estimates tend to be too low.

AI can make mistakes

All AI firms now say somewhere that their service can make mistakes, so we should check the results. That’s always a worthless disclaimer for us, and presumably only useful to the firms the next time they get sued.

In this case, checking the results can actually be expensive. It could cost you and your insurance, plus it could cost doctors time they would spend on patients who really need their care.

But one thing you can always do — and always should do — is to get the AI chatbot to answer again. “Prove it,” you can type.

Fowler tried just asking ChatGPT exactly the same question multiple times. He did get an F again, but only sometimes.

In all, accessing the same data and being asked the same question, ChatGPT returned every grade from F up to B.

Maybe ChatGPT was responding to how it was making Fowler’s heart rate soar every time it told him he was that dangerously ill.

Importantly, Apple has said that it does not work directly with either ChatGPT or Claude on this topic. It is also working to produce its own Health AI assistant in future.

Hopefully that one will be more accurate. But at least you know Apple won’t share your health data with anyone.
